How to Download Them All

One of my primary jobs as a Data Archivist at DataMeet is to download and archive data from the internet, mostly from government websites. I usually use Python scripts to download, scrape and clean the data. But sometimes I just need to download many files and store them. I could still use Python, but it's overkill. So here are some of the methods that I use.

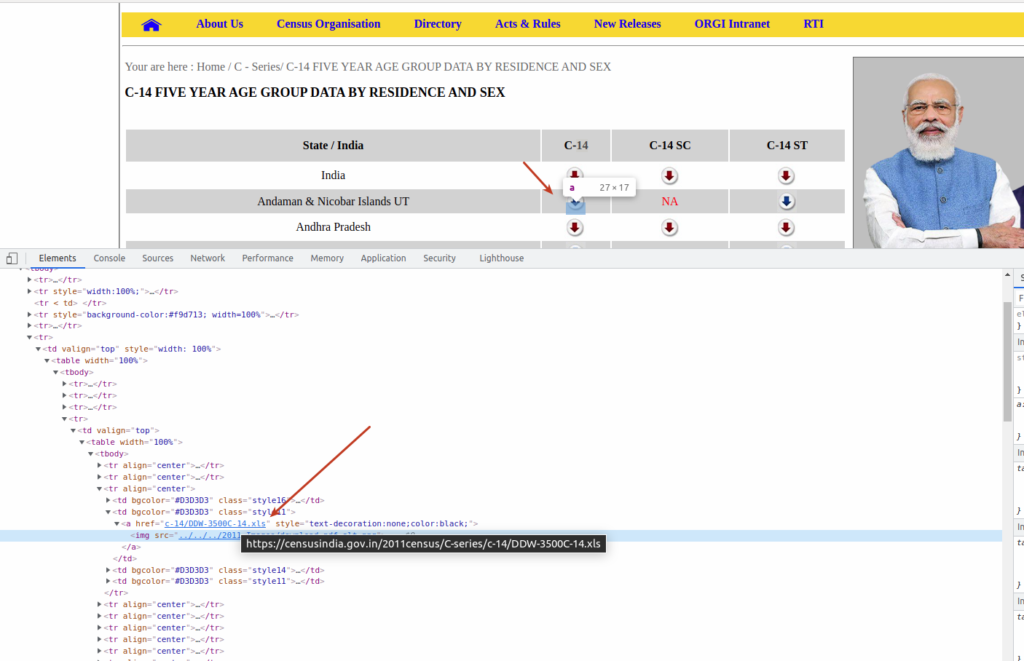

Recently I had to download the Census of India 2011, Series C, C-14 FIVE YEAR AGE GROUP DATA BY RESIDENCE AND SEX data. Fortunately, the data is available in .xls format and is linked from a web page. I can manually click on each link and download it, or use some automation to download them all. I would like to go with automation, which involves two steps: 1. Get all the links, and 2. Download and save them. So let's see how you can do that. Of course, this is just an example; you could use similar logic in other places.

Identify what to download

Open the inspector in Chrome or Firefox to identify the links and their pattern. In this case, the download icons are just anchor tags linking to .xls files. So we can get the anchor tags, and the href attribute will have the actual link.

Identify a pattern in the links so you can filter them. For example, in this case the links we are interested in end with .xls or contain c-14/DDW. We will use this pattern in the tools below.

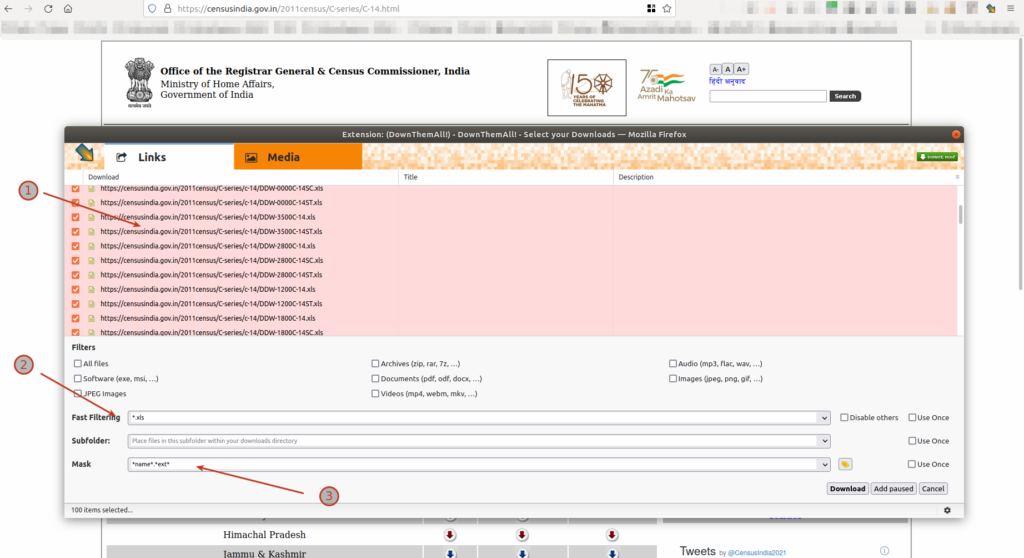
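The pattern described above can be tried out on a few sample values before touching the real page. This is only a minimal sketch using grep: the href values are made-up samples in the style of the page, and filter_links is a hypothetical helper name.

```shell
# Keep only hrefs that end in .xls or contain c-14/DDW.
# filter_links is a hypothetical helper; it reads one href per line from stdin.
filter_links() {
  grep -E '\.xls$|c-14/DDW'
}

# Made-up sample hrefs, one per line. Only the first two match the pattern.
printf '%s\n' \
  'c-14/DDW-0000C-14.xls' \
  'c-14/DDW-0000C-14SC.xls' \
  'C-13.html' \
  '../index.html' | filter_links
```

Running this prints only the two c-14/DDW lines. Checking the pattern this way makes it easier to spot links that would be missed or wrongly included.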

Using the DownThemAll! Addon

The DownThemAll! Firefox addon was one of the best addons for this purpose. It used to have a lot of functions, but when it migrated to the current WebExtension standard it lost some functionality. It is still the best out there and very friendly to non-programmers. It lets you filter the URLs based on a pattern, rename the downloaded files, and batch them for download.

What we can do and did do is bring the mass selection, organizing (renaming masks, etc) and queueing tools of DownThemAll! over to the WebExtension, so you can easily queue up hundreds or thousands files at once without the downloads going up in flames because the browser tried to download them all at once.

DownThemAll!

Using CLI – List Files

List the files using Xidel. I use XPath to filter the anchor tags whose href attribute contains the string "c-14/DDW". I could also have used ".xls". There are many ways to filter once you know the pattern of the URLs.

xidel https://censusindia.gov.in/2011census/C-series/C-14.html \
--xpath3="//a[contains(@href,'c-14/DDW')]/concat('https://censusindia.gov.in/2011census/C-series/',@href)" \
--silent

This returns the list of .xls files to be downloaded. Sample output of the above command:

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0000C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0000C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0000C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-3500C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-3500C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2800C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2800C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2800C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1200C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1200C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1800C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1800C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1800C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1000C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1000C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1000C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0400C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0400C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2200C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2200C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2200C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2600C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2600C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2600C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2500C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2500C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2500C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0700C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0700C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-3000C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-3000C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-3000C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2400C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2400C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2400C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0600C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0600C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0200C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0200C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0200C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0100C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0100C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0100C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2000C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2000C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2000C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2900C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2900C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2900C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-3200C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-3200C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-3200C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-3100C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-3100C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2300C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2300C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2300C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2700C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2700C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2700C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1400C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1400C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1400C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1700C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1700C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1700C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1500C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1500C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1500C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1300C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1300C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2100C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2100C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-2100C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-3400C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-3400C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-3400C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0300C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0300C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0800C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0800C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0800C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1100C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1100C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1100C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-3300C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-3300C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-3300C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1600C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1600C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1600C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0900C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0900C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0900C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0500C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0500C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-0500C-14ST.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1900C-14.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1900C-14SC.xls

https://censusindia.gov.in/2011census/C-series/c-14/DDW-1900C-14ST.xls

Using CLI – Download using Wget

Once you have listed and filtered the links using xidel, you can download them all using wget. The -i - option tells wget to read the list of URLs from standard input.

GNU Wget is a free software package for retrieving files using HTTP, HTTPS, FTP and FTPS, the most widely used Internet protocols. It is a non-interactive commandline tool, so it may easily be called from scripts, cron jobs, terminals without X-Windows support, etc.

Wget

xidel https://censusindia.gov.in/2011census/C-series/C-14.html \
--xpath3="//a[contains(@href,'c-14/DDW')]/concat('https://censusindia.gov.in/2011census/C-series/',@href)" \
--silent | wget -i -
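For a large batch it can help to save the list to a file first, so an interrupted run can be repeated without fetching everything again. This is a rough sketch and not the command used above: download_list, urls.txt and census-c14 are hypothetical names, and the one-second wait is an arbitrary choice.

```shell
# Download every URL listed in a file, one URL per line.
# $1: list file, $2: directory to save into (both names are up to you).
download_list() {
  list="$1"
  dest="$2"
  mkdir -p "$dest"
  # -nc   skip files that already exist, so a re-run only fetches what is missing
  # -w 1  wait one second between requests to go easy on the server
  # -P    save files under the target directory
  # -i    read the URLs from the list file
  wget -nc -w 1 -P "$dest" -i "$list"
}

# Usage, assuming the xidel output was first redirected to urls.txt:
#   download_list urls.txt census-c14
```

Keeping the list in a file also leaves a record of exactly what was archived.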

Using CLI – Download using Parallel and Wget

You can use GNU Parallel to download in parallel. Here -j specifies the number of parallel downloads.

GNU parallel is a shell tool for executing jobs in parallel using one or more computers. A job can be a single command or a small script that has to be run for each of the lines in the input. The typical input is a list of files, a list of hosts, a list of users, a list of URLs, or a list of tables. A job can also be a command that reads from a pipe. GNU parallel can then split the input and pipe it into commands in parallel.

GNU Parallel

xidel https://censusindia.gov.in/2011census/C-series/C-14.html \
--xpath3="//a[contains(@href,'c-14/DDW')]/concat('https://censusindia.gov.in/2011census/C-series/',@href)" \
--silent | parallel --bar -j 3 wget -nv {}
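If GNU Parallel is not installed, xargs can do a similar job. This is a swapped-in alternative, not the command used above. The -P flag is available in GNU and BSD xargs but is not part of POSIX, and download_parallel and DOWNLOADER are hypothetical names introduced for this sketch.

```shell
# Read URLs from stdin and run up to $1 downloads at a time, one URL each.
# DOWNLOADER is a hypothetical variable for swapping the command;
# it defaults to "wget -nv".
download_parallel() {
  max_jobs="$1"
  # The expansion is left unquoted on purpose so that "wget -nv"
  # splits into the command and its flag.
  xargs -n 1 -P "$max_jobs" ${DOWNLOADER:-wget -nv}
}

# Usage: pipe the xidel command from above into the function, e.g.
#   <xidel command> | download_parallel 3
```

Unlike parallel --bar, this shows no progress bar; it only limits how many downloads run at once.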

Using CLI – Download using Aria2

Aria2 is one of the best tools for downloading files from the internet. You can use its command-line version, aria2c, instead of wget. Here -j sets the maximum number of downloads that run concurrently, and -i - reads the list of URLs from standard input.

aria2 is a lightweight multi-protocol & multi-source command-line download utility. It supports HTTP/HTTPS, FTP, SFTP, BitTorrent and Metalink. aria2 can be manipulated via built-in JSON-RPC and XML-RPC interfaces.

aria2

xidel https://censusindia.gov.in/2011census/C-series/C-14.html \
--xpath3="//a[contains(@href,'c-14/DDW')]/concat('https://censusindia.gov.in/2011census/C-series/',@href)" \
--silent | aria2c -j 3 -i -
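aria2c's input format also accepts per-download options on indented lines below each URL, which allows renaming files as they are saved. The following is a small sketch of that idea: to_aria2_input, the target directory and the file name prefix are hypothetical choices, not part of the command above.

```shell
# Turn a list of URLs on stdin into aria2 input-file format, where indented
# lines under a URL set options for that one download.
# $1: directory to save into, $2: prefix added to each file name.
to_aria2_input() {
  dest="$1"
  prefix="$2"
  while IFS= read -r url; do
    [ -n "$url" ] || continue   # skip blank lines
    name="${url##*/}"           # file name = text after the last slash
    printf '%s\n  dir=%s\n  out=%s%s\n' "$url" "$dest" "$prefix" "$name"
  done
}

# Usage: place the function between the xidel command and aria2c, e.g.
#   <xidel command> | to_aria2_input census-c14 2011- | aria2c -j 3 -i -
```

With the example arguments, DDW-0000C-14.xls would be saved as census-c14/2011-DDW-0000C-14.xls. If only the directory needs changing, aria2c's -d option is simpler.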

So that’s how I download things, my friends. I hope this helps you download and archive things as well.